In a recent interview with Jon Lovett, host of Lovett or Leave It, journalist and podcast host Kara Swisher discussed her new series, Kara Swisher Wants to Live Forever. In the six-part CNN Original Series, Swisher explores the “booming longevity industry, investigating anti-aging treatments, biotech, and Silicon Valley’s influence on extending human life.” While exploring the digital afterlife industry, she had the opportunity to speak with a 3D hologram of herself.

Swisher told Lovett about a tech company that had created an AI avatar that looked, behaved, and sounded just like her. She nicknamed the AI hologram “in a box” her “Kara-tar.”

The tech journalist describes the conversation “with herself” in the following clip from that interview:

The box, marketed by its makers as a “holobox,” projected a life-sized 3D image of a person who looked like her, sounded like her, and answered her questions in her own voice. The longer she talked to it, the more it learned.

That technology already exists in dozens of museums, corporate trade shows, and tourist attractions around the world. You can chat with a holographic Isaac Newton in London. A holographic Leonardo da Vinci appeared at a tech conference in Paris last May.

The Naval Aviation Museum in Pensacola has holographic Navy sailors who answer visitors’ questions about life on an aircraft carrier. Purdue University recently installed holographic versions of its most famous alumni, including Amelia Earhart and Neil Armstrong, on a permanent campus display.

Ailias: Holograms of Historical Figures

Ailias is one of the companies developing holograms of historical figures that people can interact with. They are currently used in museums to educate visitors and answer questions.

That’s the version of this technology people see at conferences and exhibits. There’s another version, growing faster, with almost no public oversight. It’s called the digital afterlife industry, and its product is your dead relatives.

What’s Actually Being Sold

Companies in the digital afterlife space — HereAfter AI, StoryFile, Project December, Seance AI, re;memory, China’s Super Brain, Nanjing Silicon Intelligence — sell what researchers and ethicists now call griefbots, deadbots, or postmortem avatars. The products vary in form. Some are text chatbots. Some are voice systems that sound like the deceased. The most advanced are video or holographic avatars that appear to look at you, blink, smile, and speak in real time.

Video of a Digital Human Which Looks and Sounds Like a Deceased Family Member (Created by re;memory)

The underlying technology used by companies in the digital afterlife industry is typically the same kind of large language model (LLM) that powers ChatGPT, adapted to a specific person’s data.

To create an avatar of your mother, the company ingests whatever you can give it: text messages, voicemails, social media posts, recorded interviews, photographs, home videos, and emails.

The model learns her speech patterns, vocabulary, opinions, and the way she ended phone calls. Then it generates new conversations in her style.

Pricing varies. HereAfter AI sells subscription tiers from $3.99 to $7.99 per month, with one-time options up to $199. Project December charges roughly $10 per simulated conversation. Premium services in the United States can run setup fees of $15,000.

In China, customized griefbots from Super Brain reportedly cost between $6,860 and $13,710. South Korea’s re;memory has built avatars from as little as a 10-second voice clip and a handful of photos.

The Digital Afterlife Industry in China

You can also commission an avatar of yourself, before you die, for your family to use afterward.

The Consent Problem Nobody Is Solving

The most basic ethical question about this industry has no legal answer in the United States: Who has the right to turn a dead person into a chatbot?

If you commission an avatar of yourself while you’re alive, you’ve consented. But what about the avatar your daughter creates of you after you’re gone, using the texts you sent her, the voicemails you left, and the photos you posted on Facebook?

You never agreed to be reanimated. Neither did the people you texted with — your sister, your friends, your ex — whose private conversations are now being uploaded to a commercial AI company to train a model that mimics you.

With no regulation or oversight in place, Ailias has written its own set of policies, published on its “Ailias AI Ethics and Responsible Use” page.

Here is the introduction to their ethics page:

“At Ailias, we believe that creating lifelike, conversational digital humans carries a profound responsibility. Our technology brings historical figures, cultural icons, and approved modern personalities to life through interactive holograms — experiences that inspire, educate, and engage audiences in public, commercial, and educational environments.

“With this capability comes a duty to act ethically, transparently, and responsibly. This page outlines Ailias’ approach to AI ethics and responsible use, covering how we design, govern, and deploy digital humans and conversational holograms responsibly.”

Researchers Tomasz Hollanek and Katarzyna Nowaczyk-Basińska at Cambridge University’s Leverhulme Centre for the Future of Intelligence have called for what they describe as a principle of mutual consent.

Both the person being recreated and the person who will interact with the avatar should have to agree, in advance, before any avatar is built. Right now, neither is required. A grieving spouse can build an avatar of their dead partner without that partner ever having agreed to it, and adult children may find themselves talking to a digital version of a parent they never wanted to summon.

The data exposure goes further. Once you hand over your mother’s texts, voicemails, and photos to a private company, the company has them. Most terms of service in this industry give the platform broad rights to use that data to improve its models.

The Hastings Center for Bioethics has flagged the obvious risk: the data your dead relative shared in private with you, in conversations she never expected to be commercialized, is now training a corporate AI product. So is everything anyone ever wrote to her.

A Grief Market With Subscription Pricing

The most uncomfortable part of this industry is its business model.

Funeral homes charge once. Headstones don’t have monthly fees. Most griefbot companies, by contrast, run on subscriptions or per-session billing. The longer you keep talking to the digital version of your dead mother, the more revenue the platform earns. The financial incentive runs in exactly the wrong direction for healthy mourning.

Researchers studying this have raised the alarm. A 2022 paper in Science and Engineering Ethics by Nora Freya Lindemann argued that griefbots may interfere with grief rather than ease it, by allowing users to avoid the painful work of accepting loss.

Louise Richardson, a philosopher at the University of York, told The Guardian that ongoing conversations with a chatbot of the dead “can get in the way of recognizing and accommodating what has been lost.” The Hastings Center has warned that subscription pricing, applied to a vulnerable population in acute grief, creates conditions ripe for exploitation.

There is also the question of what these systems are quietly collecting on the living people who use them. When you tell your AI grandmother about a struggle in your marriage, that conversation is logged.

Your interests, anxieties, vulnerabilities, and consumer preferences are now data the company holds. Nothing in current US law prevents that data from being sold to advertisers, used to train other models, or repurposed in ways the grieving user never anticipated.

And what happens when these companies fail? StoryFile, one of the best-known names in the industry, filed for Chapter 11 bankruptcy protection, reportedly owing about $4.5 million to creditors.

The company has said it is reorganizing and building a “fail-safe” so families can retrieve their materials if it folds. That promise depends on StoryFile remaining solvent long enough to keep it.

Other companies in the space have offered no such commitment. When a digital afterlife company shuts down, the avatars vanish — and so does the data, sometimes into the assets sold off in bankruptcy proceedings.

The Historical Figure Problem

The museum version of this technology raises a different concern, less commercial but more politically loaded.

A holographic, conversational version of a historical figure is not a recording. It is a generative system that produces new speech, in that person’s voice and likeness, based on a curated training dataset.

Digital Human Gallery: Historical Figures by Ailias

More historical figures and other digital humans appear on the company’s website.

Someone decides what data goes in. Someone decides what topics the avatar will and won’t engage. Someone writes the system prompts that shape its responses.

Ailias’ introduction to the historical digital human section of its website:

“We create life-size conversational 3D human holograms that bring iconic figures to life through natural, real-time interaction. Each hologram is full-body, photorealistic, interactive and designed to talk, teach and engage audiences in live environments.

“Ailias can create photoreal digital humans—from brand ambassadors and subject-matter experts to recognisable personalities (subject to IP approval)—as well as deploy licensed characters from our existing collection — all powered by low-latency AI for seamless, responsive conversations.”

Frederick Douglass did not say what a corporately licensed holographic Frederick Douglass will say to a fifth-grade class. Neither did Harriet Tubman, Martin Luther King Jr., Cesar Chavez, or any other historical figure whose voice can now be synthesized and whose face can now be projected.

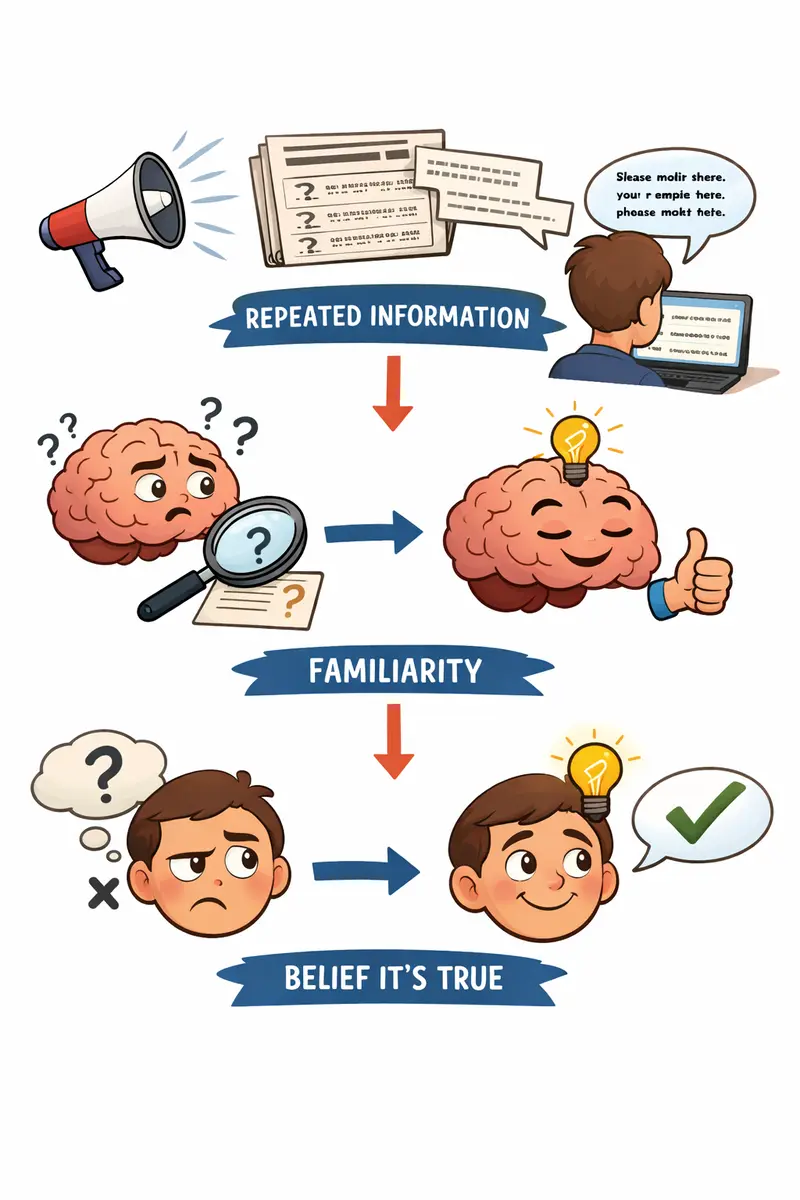

The avatar’s answers depend entirely on what its operators chose to feed it and what guardrails they imposed. Visitors will remember those answers as if the actual person spoke them.

The illusory truth effect, the well-documented tendency for people to rate a claim as more believable the more often they encounter it, applies here at a scale historians have not had to confront before.

A schoolchild who sees a life-sized, three-dimensional, conversationally responsive Abraham Lincoln tell them what he thought about a contemporary issue is not going to remember the disclaimer printed beside the exhibit. They are going to remember Lincoln saying it.

The technology is ideologically neutral. The deployment never is. Whoever controls the script controls the message, and the message arrives wearing the face of someone who can no longer object.

The Regulatory Vacuum

There is no federal law in the United States governing the creation, sale, or operation of postmortem AI avatars. There is no requirement that the dead person consented to be recreated. There is no requirement that the data used to build the avatar be obtained legally or ethically.

There is no requirement that the company disclose how it collects, retains, or sells user interactions. There is no requirement that the avatar be retired when the family stops paying, or when the company is sold, or when the technology is repurposed.

A few states have postmortem right-of-publicity laws that limit commercial use of a dead person’s likeness, but those statutes were written for film and advertising, not for AI systems that generate new speech. Most courts have not yet been asked to apply them to this industry. Until that happens, the entire framework rests on company terms of service — agreements signed by grieving customers in the worst week of their lives.

China requires that people whose biometric data is used to create a deepfake be notified and give their consent, though enforcement is uncertain. The European Union’s AI Act includes some transparency provisions that may apply.

The United States has nothing.

This is not a future story. The industry is here. It has paying customers, venture funding, and bankruptcy filings. It has institutional partnerships with museums, universities, and corporations. It has a business model designed around prolonged engagement with people who are grieving. And it operates almost entirely outside any regulation that was written with this technology in mind.

A society that has not decided whether the dead can consent, whether the bereaved can be billed by the minute to talk to them, or whether a corporation should own a digital copy of your grandmother is a society that has already let the market decide for it.