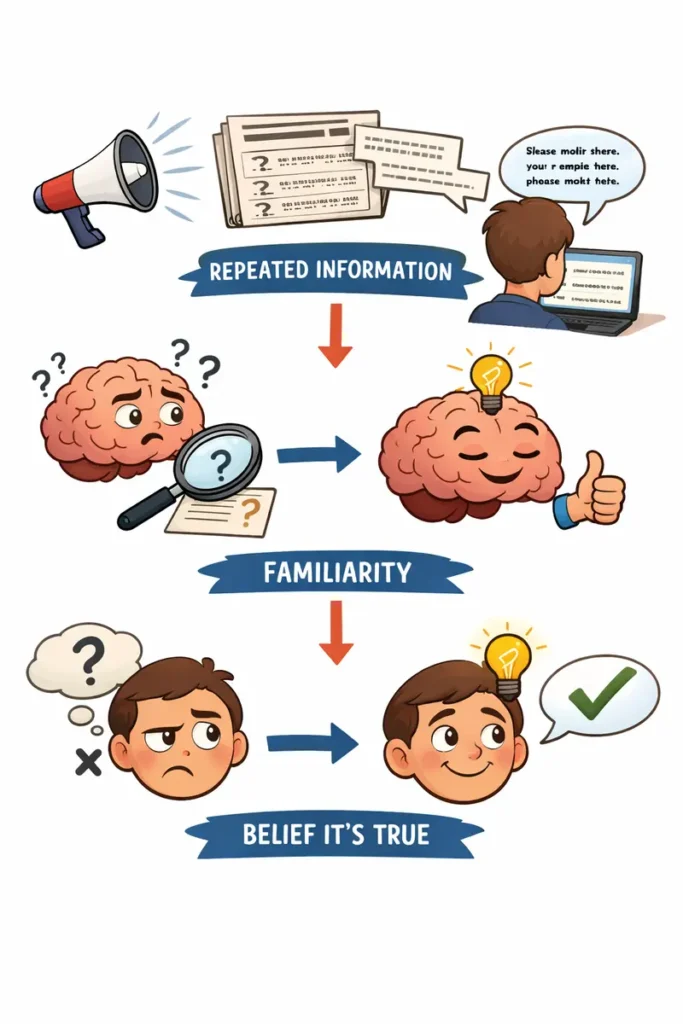

There’s a reason you can’t shake the feeling that something you’ve heard a dozen times must be true — even when a small voice in the back of your head says otherwise. That instinct has a name: the illusory truth effect. And right now, it’s being weaponized against you.

The illusory truth effect is a cognitive bias in which people come to believe a statement is true simply because they’ve heard it repeated.

It doesn’t matter whether the claim is accurate, plausible, or even internally consistent. Repetition creates familiarity. Familiarity creates a feeling of ease. And that ease gets mistaken for truth.

Psychologists have known about this phenomenon since 1977, when researchers at Villanova University and Temple University ran a straightforward experiment.

They gave college students lists of plausible-sounding trivia statements — some true, some false — and asked them to rate how confident they were in each one. The catch: some of those statements appeared on every list, while others showed up only once.

Over the course of several weeks, participants’ confidence in the repeated statements climbed steadily, regardless of whether those statements were actually true. The statements they only encountered once? Their ratings never budged.

Nearly five decades of research since then has confirmed what that first study found — and made the picture considerably more alarming.

Your Brain Has a Shortcut Problem

Here’s why this happens. When your brain encounters a piece of information it has processed before, it processes that information more easily the second time around. Psychologists call this “processing fluency.” In everyday life, fluency and truth tend to correlate — things you’ve encountered before are often things that are real. Your brain learned this shortcut long before the internet existed.

The problem is that the shortcut doesn’t distinguish between information that’s familiar because it’s true and information that’s familiar because someone has been hammering it into your skull on purpose.

This is not a question of intelligence. Research consistently shows that the illusory truth effect operates independently of cognitive ability. Analytical thinkers are not immune.

People with advanced degrees are not immune. Even subject-matter experts — people who literally know better — are vulnerable, because they often fail to check incoming claims against what they already know.

Psychologists call this “knowledge neglect”: the tendency to let the feeling of familiarity override stored knowledge that could flag a claim as false.

A comprehensive meta-analysis published in Nature Communications in 2026, analyzing more than 150 empirical studies, confirmed that the effect holds across virtually every research context tested.

The researchers also found it intensifies when people are under stress, multitasking, or otherwise cognitively overloaded — in other words, exactly the conditions most Americans are under while scrolling their phones, watching the news, and trying to keep up with a relentless information environment.

Repetition as a Political Weapon

If you’re thinking this sounds tailor-made for political exploitation, you’re right.

The illusory truth effect has been a tool of propagandists for as long as propaganda has existed. But in the age of social media — with its built-in mechanisms for rapid, algorithmically amplified repetition — it has become something closer to a superweapon.

Consider how political messaging works in 2026. A false claim doesn’t need to convince anyone the first time it’s uttered. It just needs to be repeated.

Every rally speech, every social media post, every cable news segment, every retweet and reshare is another repetition. And every repetition nudges the claim slightly closer to feeling true in the minds of people exposed to it — even people who initially rejected it.

The strategy isn’t subtle. As former White House strategist Steve Bannon once explained, the approach is to “flood the zone” — to generate so many claims, so rapidly, that no single falsehood can be adequately scrutinized before a dozen more arrive to take its place.

This isn’t information; it’s a firehose. And researchers who study misinformation have documented exactly how effective it is.

Case Studies in Repetition

The examples from recent American politics could fill a textbook.

The “stolen” 2020 election

Despite being disproven through recounts, audits, court challenges, and the findings of Trump’s own attorney general, the claim that the 2020 presidential election was stolen has been repeated so relentlessly that large portions of the electorate still believe it.

The claim wasn’t supported by evidence the first time it was made. But repetition didn’t just keep it alive — it gave it the feeling of established fact.

Research published in Science Advances in 2025 specifically studied how election fraud misinformation erodes confidence in democratic institutions, finding that prebunking interventions were most effective among the people who had been most misinformed — a testament to how deeply repetition had embedded these false beliefs.

Inflation claims

Throughout 2025 and into 2026, President Trump has repeatedly claimed he “inherited the worst inflation in the history of our country.”

Multiple fact-checkers have documented this as false: the year-over-year inflation rate when Trump took office in January 2025 was 3.0%, far from the all-time record of 23.7% set in 1920, and the rate had already been falling sharply for over two years under the Biden administration.

But the claim gets repeated at rallies, at press conferences, and in social media posts. Each repetition makes it feel slightly more like something everyone already knows.

The $18 trillion investment figure

Trump has repeatedly cited an “$18 trillion” investment figure as evidence of economic success, with no documentation or evidence supporting the number. Fact-checkers at CNN, PBS, and FactCheck.org have all flagged it as fabricated or grossly exaggerated.

Yet it has been repeated so frequently in prepared remarks and social media posts that it has taken on the veneer of a real statistic.

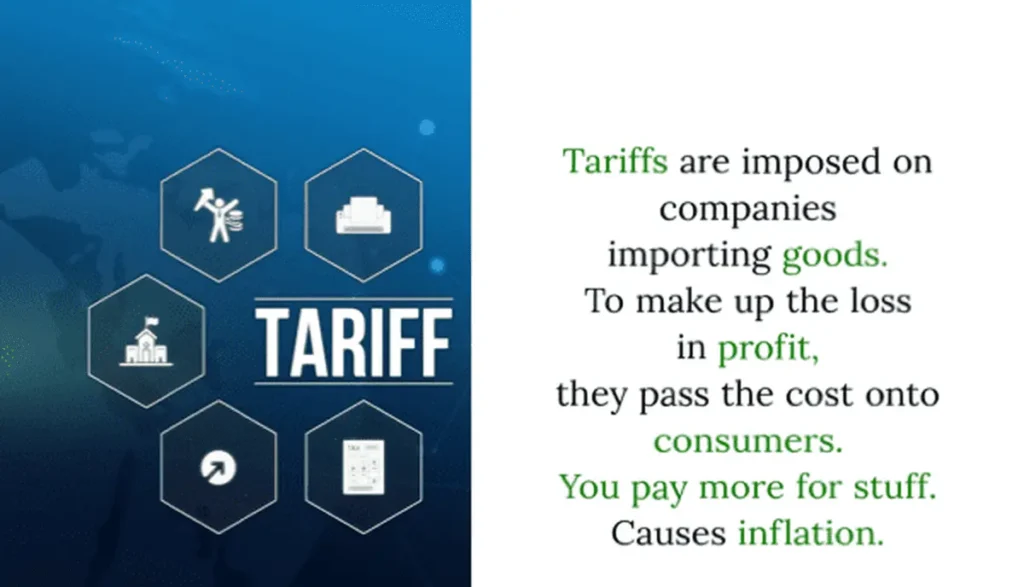

Tariffs are “paid by foreign countries”

This claim — repeated in rallies, interviews, and even the 2026 State of the Union address — is flatly contradicted by how tariffs actually work.

Tariffs are paid by U.S. importers, and those costs are frequently passed on to American consumers. The Federal Reserve Bank of New York found in a February 2026 analysis that nearly 90% of the economic burden of recently imposed tariffs fell on U.S. businesses and consumers.

But the claim has been repeated so many times that correcting it feels like swimming against a current.

The birther conspiracy

Perhaps the most thoroughly documented example of the illusory truth effect in U.S. politics is the conspiracy theory that former President Barack Obama was not born in the United States. It was debunked conclusively when Obama released his long-form birth certificate in 2011.

It didn’t matter. Years of repetition on fringe websites, talk radio, and social media had already hardened the lie into something millions of people accepted as common knowledge.

Each of these examples follows the same pattern: a claim is made, it is debunked, and it is repeated anyway — because repetition, not evidence, is the mechanism that makes it stick.

The White House Strategy: “President Trump Is Right”

The institutional machinery surrounding these repeated falsehoods is itself noteworthy. CNN reported in March 2026 that the White House has adopted a standard response when journalists fact-check the president’s claims: “President Trump is right.”

The response is deployed regardless of the specific claim being challenged, and it rarely includes evidence to support the assertion. Instead, it pivots to a broader ideological narrative.

This is the illusory truth effect being used at the level of government communications. The goal isn’t to win the argument — it’s to ensure the claim gets one more repetition.

The Paradox of Correction

Here’s the part that should keep you up at night: correcting a lie requires repeating it.

This is the central paradox of fighting the illusory truth effect. Every time a journalist writes “Trump falsely claimed that…” or a fact-checker publishes a detailed debunking, the false claim gets one more airing. The correction is necessary. But the repetition embedded in the correction works against the corrector.

CNN fact-checker Daniel Dale has described this dynamic directly: news outlets may initially check a false claim, but they are unlikely to keep pointing out it’s false as it gets repeated over weeks and months, “especially because he [Trump] is constantly mixing in dozens of new lies that require time and resources to address.”

The sheer volume of falsehoods exhausts the capacity of fact-checkers and news consumers alike.

Fighting Back: Truth Sandwiches and Prebunking

Researchers haven’t given up. Two countermeasures have shown real promise.

The first is what cognitive linguist George Lakoff calls a “truth sandwich.” The technique is simple: lead with the truth, briefly mention the falsehood, then return to the truth. The idea is to capitalize on how memory works — people remember beginnings and endings more than middles. By bookending a correction with accurate information, you minimize the amount of airtime the lie gets inside someone’s head.

The recency half of that idea has a familiar political illustration: the often-repeated claim that President Trump tends to remember, and base his opinion on, whatever he heard from the last person he spoke with.

The second, more ambitious approach is called prebunking, or psychological inoculation. Just as a medical vaccine exposes your immune system to a weakened pathogen so it can recognize the real thing, prebunking exposes people to weakened examples of misinformation tactics — emotional manipulation, fake experts, scapegoating, conspiracy thinking — so they can recognize those tactics when they encounter them in the wild.

The research behind prebunking is substantial and growing. A 2026 meta-analysis of 33 inoculation experiments involving more than 37,000 participants found that prebunking consistently improves people’s ability to distinguish reliable information from manipulative content — without making them more cynical or distrustful of information in general.

Google’s research division, Jigsaw, has rolled out prebunking videos to tens of millions of people via YouTube. Before the 2024 EU elections, a prebunking campaign reached over 120 million YouTube users across 12 countries and measurably improved viewers’ ability to spot manipulation.

Games like Bad News and Go Viral! put players in the role of a propagandist, teaching them the playbook from the inside. Research shows that just five to fifteen minutes of play can reduce susceptibility to misinformation for up to three months.

What You Can Do

Understanding the illusory truth effect is itself a form of inoculation. Once you know how the trick works, it’s harder to fall for it — though not impossible, because the effect operates below the level of conscious reasoning.

Here’s a practical approach: when you encounter a claim that feels true, pause and ask yourself why it feels true. Is it because you’ve seen evidence for it? Or is it because you’ve simply heard it before?

That moment of reflection — what researchers call “accuracy prompting” — can interrupt the automatic process by which familiarity gets mistaken for truth.

Seek out original sources. When a politician cites a statistic, look for the underlying data. When a social media post makes a dramatic claim, check whether credible news organizations have verified it. The University of Cambridge even offers a free two-minute quiz that can help you test your own susceptibility to misinformation.

None of this is easy, and none of it is foolproof. The illusory truth effect is a feature of how human brains work, not a bug that can be patched. But recognizing the machinery of manipulation is the first step toward refusing to be manipulated by it.

In a political environment where the strategy is to repeat lies until they feel like common sense, the most radical thing you can do is slow down and think.